Lost Tech Archive

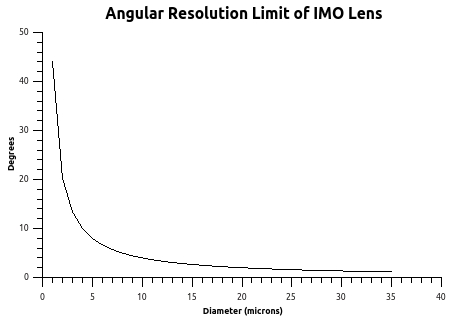

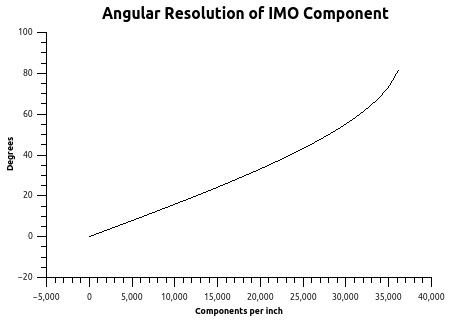

Traditionally, because of the techniques available and hence the size scale involved, it has been far simpler to design and manufacture imaging devices based on a serial arrangement of lensing components working on an image rather than a collection of components processing values of pixels in parallel. This preference has hidden the potential of IMO. Practical IMO systems use serial sets of components to generate only a point, or pixel, of the final image. To produce images of a quality comparable to existing imaging systems requires a density of elements - and hence an element size - that cannot be achieved using traditional techniques. The literature on Integral Photography (an early example of an IMO application) repeatedly refers to this problem of making elements of small enough size to achieve packing densities sufficient for high quality image production.

The situation is similar to having enough dots per inch in a halftone image to produce an image where the dots do not intrude on the perception of the image as a whole. In the case of IMO systems, however, each dot is an entire active subsystem. Limits on lens diameters have, in the past, precluded the small sizes needed for acceptable pixel densities for IMO planes. Furthermore, designing and manufacturing such systems is far more complex than the large curves and straight-forward layout of traditional optical imaging systems.

These severe limitations on implementation have restricted the technology to relatively coarse three-dimensional displays, such as stereo panoramagrams and integral photography, and to the well-known novelty items that employ lenticular screens to change between two or several images as the viewing angle changes. All of these use arrays of simple refractive components. In particular, these limitations have discouraged investigation of this technology as a general approach to imaging devices.

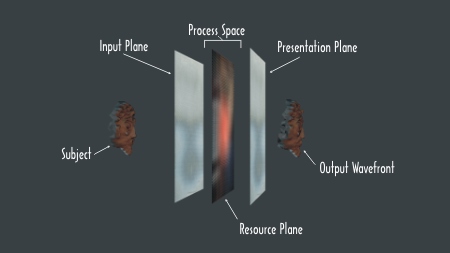

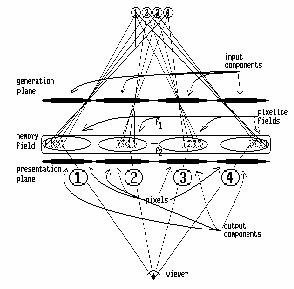

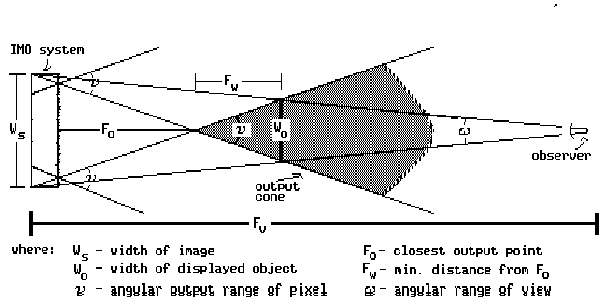

System Architecture

Rather than working with an image as a whole, IMO systems, like bitmapped computer graphic devices, treat images as an array of points. These points are sampled, processed, and displayed as active pixels each encoding an array of information. The system's 3 basic sections are: the Input Plane, the Process Space, and the Presentation Plane:

figure 4.

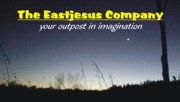

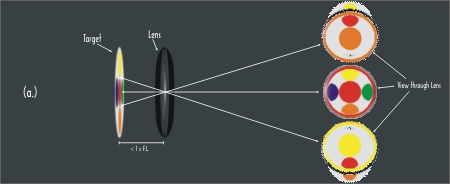

Magnifying Lens Operation

In (a.) at the left, a target is less than 1 focal length of the lens away from the lens. A viewer on the other side of the lens will see a magnified version of the target determined by the distances between the target, the lens, and the viewer. Note that the image seen by the viewer is located on the same side of the lens as the target.

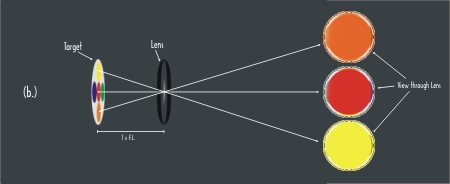

figure 5.

Pixel Output Operation

In (b.) at the left, the target is exactly 1 focal length of the lens away. In effect, the lens is providing infinite magnification and the entire lens appears to have the color of the point on the target in line from the viewer through the center of the lens. The color of the lens will change as the viewer moves because the point of the target selected scans the target with the movement of the viewer. This is the primary mode of operation of the presentation plane. As the angle of the viewer changes, the color of the output changes based on the data located on the target in the resource plane. The illustration shows vertical selection but full analog horizontal and vertical selection are available. Thus, single point data for output based on viewing angle is mapped 1 to 1 as x:y coordinates on the target.
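The angle-to-position mapping described in (b.) can be sketched numerically. This is a minimal sketch that assumes an ideal thin lens, a target exactly one focal length behind it, and a viewer distant enough to be described by a single viewing direction. The function name and the sign convention are illustrative and are not taken from the original work.

```python
import math

def target_point_for_view(focal_length, angle_x_deg, angle_y_deg):
    """Return the (x, y) point on the target seen through the lens center.

    Assumes an ideal thin lens with the target exactly one focal length
    behind it. The angles give the viewer's direction off the lens axis.
    The ray through the lens center continues in a straight line, so the
    selected point lies on the opposite side of the axis from the viewer.
    """
    x = -focal_length * math.tan(math.radians(angle_x_deg))
    y = -focal_length * math.tan(math.radians(angle_y_deg))
    return x, y

# A viewer 10 degrees to one side and 5 degrees up, with a 150 micron focal length.
print(target_point_for_view(150.0, 10.0, 5.0))
```

A viewer on the axis selects the center of the target, and the selected point moves continuously across the target as the viewing direction changes.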

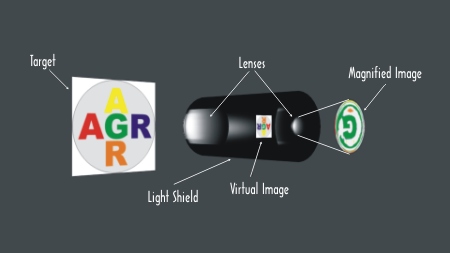

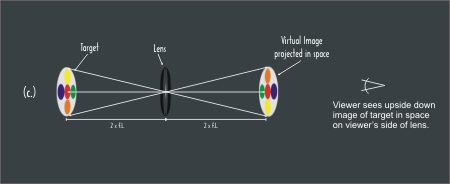

figure 6.

Virtual Image Generation

In (c.) at the left, the target is twice the focal length away from the lens and it forms a virtual image with a 1:1 relationship with the original, but upside down, in the space at twice the focal length on the other side of the lens. If the target is brought closer to the lens, but not to the focal length or nearer, the virtual image will be magnified and appear farther away from the lens on the other side. Likewise, as the target is moved farther than twice the focal length, the virtual image becomes smaller and moves toward the lens, but never gets closer than the focal length. This modality is useful for capturing volumetric data by the Input Plane for further processing.
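The three modes in (a.), (b.), and (c.) all follow from the standard thin lens relation 1/f = 1/d_o + 1/d_i. The sketch below assumes an ideal thin lens; the function name and the example distances are illustrative. Note that the image the text describes on the far side of the lens in (c.) appears here as a positive image distance, which standard optics texts call a real image.

```python
import math

def thin_lens_image(focal_length, object_distance):
    """Return (image_distance, magnification) for an ideal thin lens.

    Uses 1/f = 1/d_o + 1/d_i. A negative image distance means the image
    is on the same side of the lens as the target. A negative
    magnification means the image is inverted. When the target sits at
    the focal length, the image is at infinity.
    """
    if math.isclose(object_distance, focal_length):
        return math.inf, math.inf
    image_distance = 1.0 / (1.0 / focal_length - 1.0 / object_distance)
    magnification = -image_distance / object_distance
    return image_distance, magnification

f = 10.0
for label, d_o in [("(a.) inside f", 5.0), ("(b.) at f", 10.0),
                   ("(c.) at 2f", 20.0), ("beyond 2f", 40.0)]:
    d_i, m = thin_lens_image(f, d_o)
    print(f"{label}: image distance {d_i:.2f}, magnification {m:.2f}")
```

With the target inside the focal length the image is upright, magnified, and on the target's side of the lens. At twice the focal length it is inverted at 1:1, and beyond that it shrinks and approaches the focal length without reaching it.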

figure 7. Zone Plate

Although lenses could be used if the pixel density isn't very high, as component size decreases it becomes difficult to manufacture lenses of sufficient quality. A simple alternative would be to use pinholes, as in a pinhole camera. Unfortunately, small holes by themselves are not sufficient to produce acceptable imagery. A larger pixel surface area and greater angular resolution are needed. Fresnel Zone Plates are flat components based on diffraction that will transform angular shading information in one plane into spatial shading information on another plane. That is basically the same effect that a lens produces when it focuses an image. Zone plates are essentially holograms of a point source of monochromatic light. The use of zone plates for focusing a light image dates back at least to Fresnel's presentation of the effect before a prize committee of the French Academy in 1818 and there are references to the effect at least a century earlier. Zone plates become simpler as their size decreases, their internal component geometries being determined by the wavelengths of the light involved. Additionally, the focal lengths of zone plates become very short as their size decreases, allowing for short distances between serial components.

Fresnel zone plates should not be confused with Fresnel lenses (see Fresnel Lenses and Zone Plates). Although zone plates will act as a lens, they are very inefficient when constructed to block wave components. Instead of blocking, a zone plate can be constructed to phase shift light components, dramatically increasing image quality and efficiency. Varying the density by embossing a surface with the rings significantly increases the brightness transmitted by a component, because it shifts the phase of light components instead of blocking them. Because a zone plate is essentially a planar object, as opposed to a large curved surface, practical element densities of up to several hundred elements per linear inch can be manufactured using available printing and embossing processes. Additional improvements in brightness and resolution can be achieved by overlapping the zone plates slightly so that some of the outer rings share the same area on the screen. It is important to note that the bands are wave phase adjustment patterns and the overlapping areas are comprised of recorded wave phase adjustment patterns and not just an overlapping set of rings.
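The dependence of zone plate geometry on wavelength and focal length can be illustrated with the textbook zone radius formula. This is a sketch, not the author's design software. The function names and the sample values (0.5 micron light, 200 micron focal length) are assumptions chosen only for illustration.

```python
import math

def zone_radii(wavelength, focal_length, n_zones):
    """Radii of the zone boundaries of a Fresnel zone plate.

    Uses r_n = sqrt(n*lambda*f + (n*lambda/2)**2). For focal lengths much
    longer than the wavelength this is close to sqrt(n*lambda*f).
    All lengths share one unit (microns here).
    """
    return [math.sqrt(n * wavelength * focal_length + (n * wavelength / 2.0) ** 2)
            for n in range(1, n_zones + 1)]

def focal_length_from_center_zone(wavelength, center_radius):
    """Approximate focal length implied by the center zone radius: f = r1**2 / lambda."""
    return center_radius ** 2 / wavelength

radii = zone_radii(wavelength=0.5, focal_length=200.0, n_zones=10)
# Width of each zone: the rings get narrower toward the edge.
widths = [outer - inner for inner, outer in zip([0.0] + radii[:-1], radii)]
print([round(r, 2) for r in radii])
print([round(w, 2) for w in widths])
```

Because the focal length scales with the square of the center zone radius, halving the size of a zone plate cuts its focal length to a quarter, which is why small components allow short distances between planes. The narrowing outer rings are what set the manufacturing resolution needed for a given number of zones.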

Zone Plate Demonstration

figure 10. View from the camera in figure 8 as the Guckel zone plate is moved back and forth in the path of the light. |

Chromatic aberration becomes a significant issue at the sizes of components needed, and there are several ways of dealing with the effect or taking advantage of it. The simplest is to have separate resource planes for each color. This lends itself well to photographic applications which already use separate emulsions stacked on each other for separate colors. Another approach, extensively used in the graphic arts and video industries, is to restrict each pixel to a single color and use groupings of pixels much like the RGB triads used in color CRTs or color separations done in halftone printing. A third approach would make use of color corrected zone plates. In this approach, separate zone plates are designed to focus separate colors on the same resource plane area. These zone plates are designed also as filters and they are superimposed on the same center such that different wavelengths interact with different components at the same location, resulting in all colors focusing at the same plane. This would be similar to the subtractive color processes used in modern color transparency films. Diffraction grating elements can be used if separation of wavelengths or color is specifically desired. In this case, the pixelite fields have mappings of color separations that are used by the presentation plane. This allows construction of images containing calibrated spectral graphs. Likewise, variations (derived by numerically generating a phase shifting or absorbing surface that performs the desired transform function) can be easily incorporated into portions of the image. Even greater improvements in resolution and color control could be achieved through the use of metamaterials by selectively controlling refractive index and wavelength interaction.

figure 11. Color Corrected Zone Plate

{ Discuss off axis zone plates and other variations as specific instances of cuts through the wave pattern in space.}

Component Design

Single Pixel Output Component

Basic design considerations for a single pixel output are presented. Typically, Fp = tp. It is important to take the refractive index of the media into account in determining the wavelength.

An examination of the processing of a single pixel through an IMO system illustrates the functioning of the system:

figure 12. Single Pixel Processing in IMO System

Angular shading information (yellow, red, and orange in figure 12 above) at a component on the Input Plane is transformed into spatial shading information in the Pixelite Field on the Resource Plane by the IMO component on the Input Plane.

The resource plane is a planar mapping of angular shadings used by the presentation plane. Each component on the presentation plane has a corresponding area on the resource plane called the pixelite field which supplies it with shading information for all angles that it services. There is a discrete area in the pixelite field for every output angle of the component on the presentation plane. The pixelite fields may be generated live, can be pre-recorded, or can be some combination of the two.

Spatial shading information from the pixelite field on the resource plane is transformed into angular shading information by the component on the presentation plane. Since images are composed of points, each pixel need present only one shading value for any one viewing angle. If we think of the IMO component on the presentation plane as a hole in an opaque sheet and the pixelites on the resource plane as three different shadings behind it, then a viewer at a' would see the shading a" through the hole (the IMO component), while a viewer at b' would see the shading b", and c' would see c".
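The hole-in-an-opaque-sheet picture above can be written as a small lookup using similar triangles. This is a sketch under simplifying assumptions: one dimension only, a pinhole at x = 0, and a pixelite field centered behind the hole. The function name, parameters, and shading labels are hypothetical.

```python
def shading_seen(viewer_x, viewer_distance, gap, pixelites, pixelite_width):
    """Pick the pixelite visible through a pinhole located at x = 0.

    viewer_x and viewer_distance locate the viewer in front of the sheet,
    gap is the distance from the hole back to the resource plane, and
    pixelites is a list of shadings laid out side by side in order of
    increasing x, centered on the hole. Returns None if the line of sight
    misses the pixelite field.
    """
    # Similar triangles: the sight line through the hole lands on the
    # opposite side of the axis from the viewer.
    x_on_resource = -viewer_x * gap / viewer_distance
    field_width = len(pixelites) * pixelite_width
    offset = x_on_resource + field_width / 2.0
    if not 0.0 <= offset < field_width:
        return None
    return pixelites[int(offset // pixelite_width)]

field = ["left shading", "middle shading", "right shading"]
for viewer_x in (-10.0, 0.0, 10.0):
    print(viewer_x, shading_seen(viewer_x, 100.0, 10.0, field, 1.0))
```

A viewer to the right of the axis sees the left pixelite and vice versa, which is the same left-right reversal that a lens or zone plate in place of the hole would produce.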

Evolution of Single Pixel Operation

figure 13. Basic operation of a single 1 dimensional pixel

figure 14. Pixel operation expanded to 2 dimensions.

figure 15. Pixel operation with lens replacing aperture.

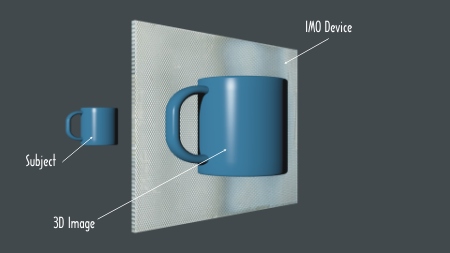

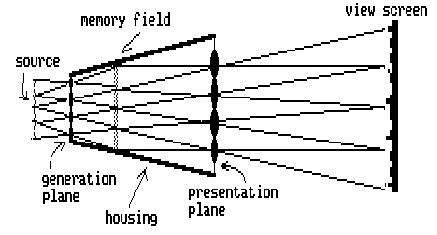

Realization

Practical IMO Systems consist of at least a resource plane and one other plane and may have more as well as other components depending on the application. The general principles can be seen if we examine a simplistic example of this technology.

In figure 16 above, there is a generation plane and presentation plane of four components each separated by some space with an object on one side and a viewer on the other. The four components of the generation plane each generate an image of the object in their corresponding pixelite fields. The components of the presentation plane are on the same centers as the generation plane components but the focal length of the components is shorter so that the distance from the pixelite field images to the presentation plane is shorter. The viewer sees a composite image composed of a set of points, or pixels, selected one from each pixelite field by each component of the presentation plane. Because of the difference in focal lengths of the two screens, the image is composed of pixels from a small area of the sampled object. By careful selection of the geometry of the screens, the apparent image as seen by the viewer is composed of a set of points that covers the entire field of view in a geometrically accurate manner. Thus, the system acts as a magnifier with the added advantage that full parallax and stereopsis is maintained throughout the field of view designed into the system. The reverse geometry would result in a wide angle viewer.
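The magnifying behaviour of the two-screen arrangement can be checked with a one-dimensional paraxial sketch. It assumes that every component behaves like a pinhole at its center, that the resource plane lies one generation focal length behind the generation plane and one presentation focal length in front of the presentation plane, and that the object is far away compared with both focal lengths. The function name and all numeric values are illustrative assumptions, not measurements from the prototype.

```python
def object_point_shown(component_x, f_generation, f_presentation,
                       object_distance, viewer_distance, viewer_x=0.0):
    """Return the lateral object position displayed by one component pair.

    The viewer's sight line through the presentation component picks a
    point in the pixelite field. That point is traced back through the
    generation component on the same center to the object plane.
    """
    # Offset of the selected pixelite from the component center.
    shift = (component_x - viewer_x) * f_presentation / viewer_distance
    # The generation component maps that offset back onto the object.
    return component_x - shift * object_distance / f_generation

# Four components on 1 mm centers, presentation focal length half the
# generation focal length, object and viewer both 100 mm away.
for x_k in (-1.5, -0.5, 0.5, 1.5):
    print(x_k, object_point_shown(x_k, 2.0, 1.0, 100.0, 100.0))
```

Under these assumptions each component shows an object point only half as far from the axis as the component itself, so the composite image spans twice the width of the sampled region. Moving `viewer_x` shifts the selected object points, which is the parallax the text describes.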

A practical device using this approach requires at least thirty and more likely one to three hundred components per linear inch to produce high quality images. To maintain separation of pixelite fields at that density, focal lengths of components must be in the 100 to 1000 micron range. If the generation and presentation plane components are made on the two sides of a sheet of transparent material, the resource plane images can be generated within the material and image magnifiers can be manufactured on a single continuous sheet.
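The link between component density and focal length can be estimated with a simple geometric bound. The sketch below assumes that a pixelite field may be no wider than one component pitch and that it must cover the whole angular viewing range. It ignores refraction inside the sheet. The 30 degree viewing range is an assumed value for illustration.

```python
import math

MICRONS_PER_INCH = 25400.0

def max_focal_length_um(components_per_inch, viewing_range_deg):
    """Longest focal length (microns) that keeps adjacent pixelite fields apart.

    Assumes each pixelite field may be at most one component pitch wide
    and spans the full viewing range: width = 2 * f * tan(range / 2).
    """
    pitch = MICRONS_PER_INCH / components_per_inch
    return pitch / (2.0 * math.tan(math.radians(viewing_range_deg) / 2.0))

for density in (30, 100, 300):
    print(density, "per inch ->", round(max_focal_length_um(density, 30.0)), "microns")
```

For one to three hundred components per inch this bound gives focal lengths of a few hundred microns, which is consistent with the range quoted above.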

Aliasing of sampled data is generally considered a problem by engineers. IMO systems rely on this phenomenon to create a large image out of a multitude of related small sample sets. In general, IMO systems use the generation plane to create a resource plane consisting of a multitude of pixelite fields that are the sample sets for the presentation plane. The presentation plane selects samples from this multitude of repeating images at a spatial frequency almost equal to the component spacing of the generation plane but increasing slightly as one moves off center. This results in a set of points selected by the viewer that recreates a geometrically accurate reconstruction of a single large image incorporating any transform designed into the system. By careful selection and placement of the components and relative geometries of the three planes, sample sets can be made available to the presentation plane allowing the generation of enlarged images of small areas, reduced images of large areas, superpositioning of prerecorded data with live images, spatial filtering, spectroscopic analysis, and many other applications.

Screen Design

Screens can be designed to have particular characteristics. Software has been created that will generate a description of a screen and component geometries allowing interactive changing of viewing distance, media thickness, size, refractive index, zone center diameter, pixels/inch, field of view, pixel spacing, and wavelength selection as well as variations on plotting devices. A more general approach, also implemented in software, involves calculating a raster image of a phase absorbing plane that performs the required transform. This data, derived by either method, is then plotted at the required size and manufactured in the desired transparent media. These sheets, called MicroOptic Sheets (tm), built with standardized centers and thicknesses based on focal lengths, can then be stacked to create a multitude of imaging devices.
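As an illustration of the raster approach, the following sketch plots a single binary zone plate onto a pixel grid. It is not the original design software. The function name, the grid size, and the optical values are assumptions, and it uses the approximation r_n^2 = n * wavelength * focal length.

```python
def zone_plate_raster(size_px, pixel_um, wavelength_um, focal_length_um):
    """Return a size_px x size_px grid of 0/1 values for a binary zone plate.

    A pixel is 1 where it falls in an odd-numbered zone (counting the
    center zone as zone 0) and 0 otherwise. The 1 regions would either
    block light or shift its phase by half a wave, depending on how the
    pattern is manufactured.
    """
    center = (size_px - 1) / 2.0
    zone_area = wavelength_um * focal_length_um  # radius of zone 1, squared
    rows = []
    for j in range(size_px):
        row = []
        for i in range(size_px):
            dx = (i - center) * pixel_um
            dy = (j - center) * pixel_um
            zone = int((dx * dx + dy * dy) / zone_area)
            row.append(zone % 2)
        rows.append(row)
    return rows

raster = zone_plate_raster(size_px=41, pixel_um=1.0, wavelength_um=0.5,
                           focal_length_um=100.0)
# Crude text preview, skipping every other row to keep the aspect ratio.
print("\n".join("".join("#" if v else "." for v in row) for row in raster[::2]))
```

A full screen would tile this pattern on the chosen component centers, and a phase-shifting version would map the 0/1 values to two surface heights instead of clear and opaque areas.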

Although a rectilinear grid of components would create images similar to the XY pixel arrays common to digital imaging, packing alternate lines with hexagonal pixels would result in higher density images. Arranging pixels in a random pattern eliminates the grid effect of low resolution images, especially if the display is comprised of successive video frames each with a different random pattern, but requires data and input components to align exactly with the random pattern used. Dynamically generated components for the input and/or presentation planes along with matching aligned data on the resource plane would make this practical.
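The density advantage of hexagonal packing can be quantified with a short sketch. It assumes circular components with the same nearest-neighbor spacing in both layouts. The function names are illustrative.

```python
import math

def hex_centers(pitch, cols, rows):
    """Centers of a hexagonally packed array with nearest-neighbor spacing `pitch`.

    Alternate rows are shifted by half a pitch, and rows are spaced
    pitch * sqrt(3) / 2 apart.
    """
    row_step = pitch * math.sqrt(3.0) / 2.0
    return [((c + 0.5 * (r % 2)) * pitch, r * row_step)
            for r in range(rows) for c in range(cols)]

def density_gain_over_square():
    """Components per unit area, hexagonal versus square grid at equal spacing."""
    return 2.0 / math.sqrt(3.0)

print(hex_centers(82.0, 3, 2))
print(f"density gain: {density_gain_over_square():.3f}")
```

At the same center-to-center spacing, the hexagonal layout fits about 15 percent more components into a given area than a square grid.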

For example, assuming light with a wavelength of 0.51 microns, a system for volumetric image reconstruction designed for viewing from at least 15 inches with a 30 degree angular viewing range could have the following geometry: A sheet of transparent material 20 inches square and 6 mils thick having a refractive index of 1.496 with the presentation plane embossed on one side and a photographic emulsion on the other side would have the pixel centers in triads with 82 micron separation between component centers. This would result in 312 pixels per linear inch. The components would be zone plates with a center zone diameter of 14.4 microns. The components would have 29 zones resulting in a component diameter of 77.53 microns.
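The figures in this example can be roughly reproduced from the stated inputs. The sketch below assumes that the focal length equals the sheet thickness, that the relevant wavelength is the one inside the material, and that zone boundaries follow r_n = sqrt(n * wavelength * focal length). These are approximations, so the results land near the quoted values rather than exactly on them.

```python
import math

MICRONS_PER_MIL = 25.4
MICRONS_PER_INCH = 25400.0

wavelength_air_um = 0.51
refractive_index = 1.496
thickness_um = 6 * MICRONS_PER_MIL   # 6 mil sheet
pitch_um = 82.0
n_zones = 29

# Assumption: the focal length equals the sheet thickness, and the
# wavelength that matters is the one inside the material.
wavelength_in_sheet_um = wavelength_air_um / refractive_index
focal_length_um = thickness_um

def zone_diameter_um(n):
    """Diameter of the n-th zone boundary, using r_n = sqrt(n * lambda * f)."""
    return 2.0 * math.sqrt(n * wavelength_in_sheet_um * focal_length_um)

center_zone_diameter = zone_diameter_um(1)
component_diameter = zone_diameter_um(n_zones)
components_per_inch = MICRONS_PER_INCH / pitch_um

print(f"center zone diameter: {center_zone_diameter:.1f} microns")
print(f"component diameter:   {component_diameter:.1f} microns")
print(f"components per inch:  {components_per_inch:.0f}")
```

The center zone comes out at about 14.4 microns and the 29-zone component at just under 78 microns, which fits inside the 82 micron spacing. Dividing an inch by the 82 micron spacing gives roughly 310 components per inch.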

Development Activities

(from 1985 through 1989)

Three phases of development have taken place to date. The first involved selecting potential approaches to implementing crude prototypes. A photographic approach was selected as the medium of choice to produce images that could be used as screens and as a storage medium for resource planes. Stored resource plane systems were selected for development because a single screen could be used for both the generation plane and the resource plane, eliminating potential alignment and repeatability problems.

Early work was focused on creating a workable screen, and many attempts were made before the distinctions necessary for even a rudimentary prototype were known. This early work used simplified zone plates consisting of only the center zone because these could be generated using available equipment. Pixel density was also very coarse. Various means of generating components were tried including punching holes, cementing acrylic beads and using them as lenses, dot matrix printing, and manual photographic step and repeat. The first working image was made with a screen generated on a CRT and photographed on 4x5 film, duplicated, pasted up, reduced, duplicated, pasted up, reduced, and again duplicated, pasted up and reduced. The resulting screen had center zones that varied widely in diameter and roundness, but nevertheless produced a working image.

Most of the early work involved imaging objects at a distance of many feet from the screen. These attempts were very unsuccessful primarily because of the crudity of the components. Turnaround time on an experiment was long because of the time involved in handling and processing the many photographic steps in each try. This time lag was eliminated by copying the screen and using a translucent sheet as the resource plane. This made it possible to view the resulting image live.

The first working image was of a rectangle of plastic with two holes backlit by a square aperture behind it. The plastic was taped to a pencil and placed approximately one inch in front of the plate. It was from the many multiple images off to the sides that the need for careful spacing and separation of pixelite fields became obvious.

The software for generating screens was modified to take this into account and a new screen was created that allowed sufficient spacing between pixels. This screen was used in creating the 3D image of the clown and goose and a multiplex image consisting of 13 separate images of arrows indicating the direction to move to find a plus sign image straight on. These screens had 9.6 pixels per linear inch and, because they only had the center zones, required bright illumination from behind. As can be seen from the photomicrographs, these center zones were far from round and varied from -10% to +500% of the desired diameters. Nevertheless, the resulting images are clearly recognizable and the dynamics of the system clearly function.

The need for greater brightness, greater pixel density, and greater

resolution was obvious and a method for creating a new screen was

investigated. Holographic and other optical methods were looked into

and ultimately dropped because of lack of access to equipment as well

as lack of versatility of design. A software approach to generating

data that described components and systems was adopted and the existing

design software was modified to generate image data files for the

Matrix QCR video output camera. Because the plotting dot size, spacing,

and frame size of the output of the camera were not at the level needed

for the screens, a means of doing step and repeat as well as

photo-reductions was sought. After many attempts in-house as well as at

several graphic arts houses and printer houses, this approach was

shelved to pursue generating a screen at the semiconductor fabrication

facility at the University of Wisconsin with the help of Professor

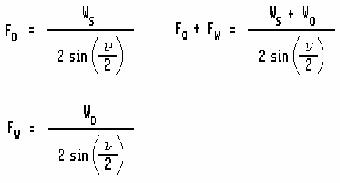

Henry Guckel. A screen was designed and

built that would use the glass plate substrate as a focusing medium.

Professor Guckel created a beautiful large zone plate metal on glass

using equipment used for creating integrated circuit components. This

master plate was reduced and duplicated using a step and repeat process

to create a new small array plate. Because of the thickness of the

plate (90 mils), the pixel density was

only at 25 pixels per linear inch, but because of the detail possible

(1 micron lines), the zone plate components had over 150 rings which

significantly increased the possible brightness and resolution.

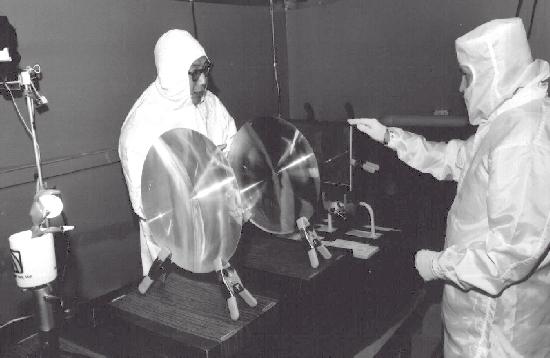

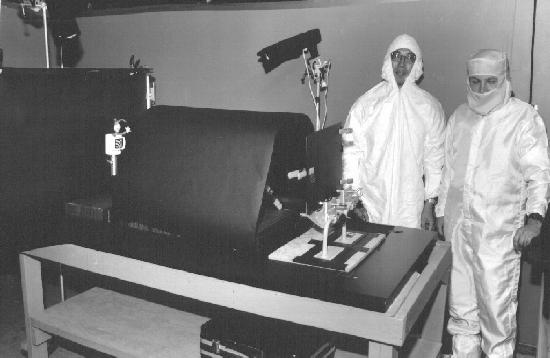

The handling of objects with that level of small detail required construction of a clean room environment and special jigs and fixtures for handling, alignment, and exposure as well as new techniques and equipment for analyses of experiments. Early work with this screen was very disappointing because of the lack of control over exposure, processing, and alignment. A course of study was undertaken to learn the dynamics of using this type of imagery. It was determined that zone plate arrays using components which worked by blocking light could not achieve better than about 10% efficiency but arrays with phase shifting components could come close to 100% efficiency. The master array was used to emboss the zone plates onto a plastic sheet to form a phase shifting zone plate array. Unfortunately, the geometry was wrong and the spill-through light between the lens elements washed out the usable data. A new design using this method with overlapping zone plates would result in spectacular increases in resolution and efficiency as well as a significant decrease in cost of reproduction but has not been tried to date.
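The efficiency figures above agree with standard scalar diffraction results for the first focal order of a zone plate. The sketch below uses those textbook formulas rather than anything measured in this project: a blocking zone plate puts 1/pi^2 of the incident light into its first-order focus, and a phase zone plate whose profile is approximated by N equal phase steps reaches (sin(pi/N) / (pi/N))^2. The function names are illustrative.

```python
import math

def blocking_zone_plate_efficiency():
    """First-order efficiency of a zone plate with alternate zones opaque."""
    return 1.0 / math.pi ** 2

def phase_zone_plate_efficiency(levels):
    """First-order efficiency of a phase zone plate with `levels` phase steps.

    levels = 2 is a simple two-height embossed pattern. A smoothly
    profiled surface corresponds to a large number of levels.
    """
    x = math.pi / levels
    return (math.sin(x) / x) ** 2

print(f"blocking: {blocking_zone_plate_efficiency():.1%}")
for levels in (2, 4, 8, 16):
    print(f"{levels:2d} phase levels: {phase_zone_plate_efficiency(levels):.1%}")
```

The blocking case gives about 10 percent, a two-level phase pattern about 40 percent, and the value approaches 100 percent as the embossed profile approaches a smooth phase ramp within each zone.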

An 8" x 10" lens array from Fresnel Technologies was used in later experiments because it was readily available. Although it was compression molded acrylic and only had about 10 lenses per inch, after annealing to flatten the plate it was sufficient to produce a long series of images for experimental as well as artistic and commercial use in the late 1980s.

figure 18. Lab prototype of the BFC (Big Fucking Camera) approximately 1987 with the author on the right in both pictures. The camera used an 8" x 10" film plate with a pinhole or lens array to capture resource plane data onto photographic film. The large lenses created a 3 dimensional virtual image of the scene with sufficient angular range to fill the angular range of the resource plane which was positioned to intersect the virtual image such that on playback the recreated object could be positioned in relation to the playback device. Later a full working prototype of the camera was built with an enclosed exposure area with rack and pinion adjustments for positioning the film plane and a service port for direct observation of the virtual image.

figure 20. Two art images created using the prototype BFC and played back using a lens array from Fresnel Technologies. The face was recorded such that the nose was at the surface of the resource plane. This shows the image viewed from 5 different viewing locations. Since the resulting image was pseudoscopic, the face appears behind the plate with the nose pressed against it and rotates smoothly tracking and following the viewer in all three dimensions as the viewer moves about the room. The second image was a time exposure created using moving lights with filters and appears as a full solid 3 dimensional sculpture with parts extending both beyond and in front of the display surface.

figure 21. Animated GIF images showing parallax with changes in viewing angle. The left set are of live faces while the right set shows 4 separate art images created using colored lights moved during a long exposure and the center image a gift wrapping bow. All were created using the production BFC mentioned earlier. Although only horizontal parallax is shown here, the images also have equal amounts of vertical parallax, as can be seen in the still pictures of the face in figure 20. Due to their 5 frame per second display, these GIF images do not do justice to the extremely smooth movement of the subject when viewing the actual images.

We have examined how a sheet magnifier can be built. This magnifier would be useful in medical applications not only for its optical properties but also as a spatter shield. Another application would be as a video screen magnifier, especially if the MicroOptic Sheets were designed to produce an interpolated image between the lines of the standard video signal, thus apparently doubling the resolution of standard video.

A slightly different configuration results in a MicroOptic projection lens. Each pixel in the system projects a single pixel on the screen. Figure 19 illustrates the arrangement required.

Note that the centers of the generation plane, the memory field, and the presentation plane are in line with the angle of enlargement, not perpendicular to the planes of the system. This is an application where specific presentation output angles are designed into the system to accommodate specific output requirements.

The pixels on CRTs and flat panel displays can be used to create the pixelites of a resource plane. With the appropriate presentation plane in place, live volumetric imaging of computer and video data becomes available.

If a light sensitized emulsion is placed at the resource plane, the image recorded, and then viewed through the presentation plane, a 3 dimensional image of the object is reconstructed for the viewer that demonstrates full parallax as the angle of view is changed. This, in fact, is what happens in integral photography using fly's eye lenses. IMO implementation would provide a quality hitherto not possible to this type of 3D imaging. From a manufacturing standpoint, the film could be made by taking a transparent sheet stock, printing or embossing the IMO pattern on one side, and coating the other with a light sensitive emulsion. With appropriate screens manufactured as part of photographic film, high quality 3D film can be manufactured for use in many applications not amenable to holographic techniques such as X-ray images, furnaces, plasmas, large displays, and portraits. Normally the generation and presentation planes would have the same focal lengths and since only one is used at a time, this allows the same screen to be used for both. However, if different focal length screens were used for recording and viewing, a magnified or wide angle image would result.

With suitable framing, windows with recorded views could be made. Along those same lines, floor tiles could be made with repeating patterns such as grass, water, (or hot coals or an aerial view!). Likewise, ceiling panels with sky, clouds, or whatever would add an openness to a room not possible with other building materials.

Each angle of view actually selects a separate image which does not have to be related to any other image. Many pages of print and graphics can be multiplexed onto a single IMO sheet and each page selected by viewing angle. Such devices could be made either to show an image by itself, or by leaving the material transparent, to superimpose an image onto a scene viewed through it. It would be practical to manufacture such devices by printing the multiplexed pattern on one side and embossing the screen on the other. Applications would include information retrieval systems (both directly viewable or projectable microfiche type sheets or in a format suitable for computer data storage), and displays that project pointers into space and display some measurement such as digital optical rangefinders or dashboard displays that can point out objects or areas forward of the vehicle. An interesting variation is the use of an elastic screen material programmed with a menu when viewed flat, but displaying images appropriate to the menu items selected by pushing on the item by a user.
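Multiplexing pages by viewing angle amounts to interleaving them into the pixelite fields. The sketch below works in one dimension and assumes one pixelite per page behind each component. The function names and the reversed storage order are illustrative assumptions, the latter reflecting the left-right flip of a lens or pinhole.

```python
def interleave_pages(pages):
    """Build a 1-D resource plane from equally sized pages.

    pages[v][k] is the shading that pixel k should show from view v.
    Each pixel k gets a pixelite field of len(pages) entries. The field
    is stored in reverse view order because a lens or pinhole in front
    of it flips left and right.
    """
    n_pixels = len(pages[0])
    if any(len(page) != n_pixels for page in pages):
        raise ValueError("all pages must have the same number of pixels")
    resource = []
    for k in range(n_pixels):
        resource.extend(page[k] for page in reversed(pages))
    return resource

def page_seen(resource, n_views, view):
    """Recover the page visible from one view index."""
    field_index = n_views - 1 - view
    return resource[field_index::n_views]

pages = [list("ABC"), list("DEF"), list("GHI")]
resource = interleave_pages(pages)
print("".join(resource))
print("".join(page_seen(resource, 3, 0)))
```

The same layout serves for unrelated pages, for the frames of an animation, or for the successive perspectives of a 3D scene. Only the content assigned to each view index changes.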

Animation sequences can be created that are viewed by turning the sheet in any direction. A multiplexed image sheet can consist of a set of frames from an animation sequence. Applications would include advertising displays, signage, and perhaps animated highway displays, such as moving arrows that rely on viewer motion to sequence a set of images.

Spatial filters can also be implemented using this technique. A screen that blocks out a spatial frequency component can be incorporated in the resource plane and the resulting apparent image will thus be modified. The resource plane would be comprised of the desired pattern repeated over the entire surface aligned with the centers of the pixels of the generation and presentation planes. Complex filtering of this type can produce a range of products that can recognize and accentuate shapes viewed through them.

If an IMO sheet has a mirror as the resource plane, the system will act as a high resolution autocollimating sheet with a prismatic effect. These would act as reflectors or as novelty mirrors or, if made as a tile, a dance floor that surrounds each object between the viewer and the screen in a rainbow of color.

Zone plates can be created for use with x-rays or other electromagnetic frequencies using metal foils and other materials to control the phase of non-visible radiation on the input plane with a fluorescent or digital sensor coupled to a visible light output at the resource plane. This would allow the creation of relatively simple and lightweight devices that could provide much of the functionality of CAT and other 3D EM scanners in an inexpensive portable device. Having a computer process data gathered from the input plane to generate a visible light resource plane for 3D output opens many more exciting possibilities. Of course, combinations of these applications could also be created that would lend themselves to specific applications such as magnifying sensors with data displays and shape recognizers built in.

Conclusions

We have examined the foundations of Integral MicroOptics as well as some of the reasons why it has not been available to date. Implementation is now straightforward using techniques available today. Magnifiers, viewers, 3D recording and playback of images and data, and optical processing devices can be built using the techniques described that have advantages over other methods. This technology has many parallels to the semiconductor industry, but is simpler in execution while at the same time as rich in applications. Many variations are possible and speculation can best be understood in the context of someone looking at the future of electronics in 1958.

If you are interested in further information, have questions, comments, or would like to use this material, please contact the author.

Bibliography

Integral MicroOptics, Ivars Vilums,

LookingGlass Technology, Madison, Wisconsin, Internal Document, 1986

Optical Imaging System Using Tone-Plate Elements , US patent 4,878,735 Ivars Vilums. Filed Jan. 15, 1988, Ser. No. 144,942 Int. Cl. G02B 27/22, 27/44. U.S. Cl.350--131. 52 claims.

Optical Properties of a Lippmann Lenticulated Sheet, Herbert E. Ives, Bell Telephone Laboratories N.Y., Journal of the Optical Society of America. vol. 21, March, 1931, pages 171-176.

Synthesis of Fresnel Diffraction Patterns by Overlapping Zone Plates, R.L. Conger, L.T. Long, and J.A. Parks, Naval Weapons Center, Corona, California, Applied Optics, Vol. 7, No. 4, April, 1968, pages 623-624.

The Amateur Scientist: The Pleasures of the Pinhole Camera and its Relative the Pinspeck Camera, Jearl Walker, Scientific American,

Optimum Parameters and Resolution Limitation of Integral Photography, C.B. Burckhardt, Bell Telephone Laboratories, Inc., Murray Hill, N.J., Journal of the Optical Society of America, vol. 58, no. 1, January, 1968, pages 71-76.

Formation and Inversion of Pseudoscopic Images, C.B. Burckhardt, R.J. Collier, and E.T. Doherty, Bell Telephone Laboratories, Inc., Murray Hill, N.J., Applied Optics, vol. 7, no. 4, April, 1968, pages 627-631.

The Effect of Semiconductor Processing Upon the Focusing Properties of Fresnel Zone Plates Used As Alignment Targets, J.M. Lavine, M.T. Mason, D.R. Beaulieu, GCA Corporation, IC Systems Group, Bedford, MA., Internal Document.

Obtaining a Portrait of a Person by the Integral Photography Method, Yu. A. Dudnikov, B.K. Rozhkov, E.N. Antipova, Soviet Journal of Optical Technology, vol. 47, no. 9, September, 1980, pages 562-563.

Holography and Other 3D Techniques: Actual Developments and Impact on Business, Jean-Louis Tribillon, Research and Development, Holo-Laser, Paris, France, Conference Title: Three-Dimensional Imaging. Conference Location: Geneva, Switz. Conference Date: 1983 Apr 21-22, Sponsor: SPIE, Bellingham, Wash, USA; Assoc Natl de la Recherche Technique, Paris, Fr; Assoc Elettrotecnica ed Elettronica Italiana, Milan, Italy; Battelle-Geneva Research Cent, Geneva, Switz; Comite Francais d'Optique, Fr; et al. Source: Proceedings of SPIE - The International Society for Optical Engineering v 402. Publ by SPIE, Bellingham, Wash, USA p 13-18 1983 CODEN: PSISDG ISSN: 0277-786X ISBN: 0892524375 E.I. Conference No.: 04122

An Analysis of 3-D Display Strategies, Thomas F. Budinger, Dept. of Electrical Engineering & Computer Sciences and Donner Laboratory, University of California, Berkeley, Ca., SPIE vol 507, Processing and Display of Three-Dimensional Data II (1984), pages 2-8.

Space Bandwidth Requirements for Three-Dimensional Imagery, E.N. Leith, University of Michigan, Ann Arbor, MI., Applied Optics, vol. 10, no. 11, November, 1971, pages 2419-2422.

Holography and Integral Photography, Robert J. Collier, Bell Telephone Laboratories, Physics Today, July, 1968, pages 54-63.

Two Modes of Operation of a Lens Array for Obtaining Integral Photography, N.K. Ignat'ev, Soviet Journal of Optical Technology, vol. 50, no. 1, January, 1983, pages 6-8.

Limiting Capabilities of Photographing Various Subjects by the Integral Photography Method, Yu. A. Dudnikov and B.K. Rozhkov, Soviet Journal of Optical Technology, vol. 46, no. 12, December, 1979, pages 736-738.

Wide-Angle Integral Photography - The Integram (tm) System, Roger L. de Montebello, SPIE, vol. 120, Three-Dimensional Imaging(1977), pages 73-91.

Through a Lenslet Brightly: Integral Photography, Scientific American, vol. 219, September, 1968, page 91.

The Applications of Holography, Henry John Caulfield (Sperry Rand Research Center, Sudbury, MA) and Sun Lu (Texas Instruments, Inc., Dallas, Tx.), Wiley Series in Pure and Applied Optics, 1970, Library of Congress Catalogue Card Number: 77-107585, SBN 471 14080 5